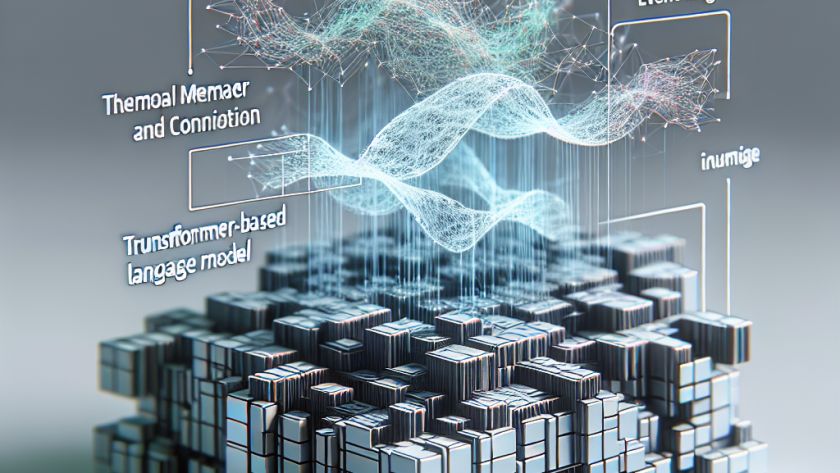

ChatGPT, an AI system developed by OpenAI, is making waves in the artificial intelligence field with its advanced language capabilities. Tools like it can draft emails, assist with research, and provide detailed information, and they are transforming how office tasks are carried out, contributing to more efficient and productive workplaces. As with any technological…