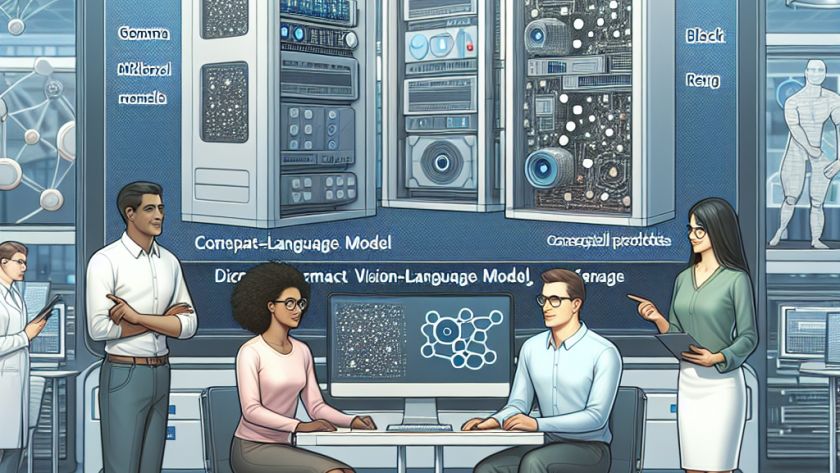

Visually rich documents (VRDs) such as invoices, utility bills, and insurance quotes pose unique challenges for information extraction (IE). Their varied layouts and formats, together with their mix of textual and visual properties, require complex, resource-intensive solutions. Many existing strategies rely on supervised learning, which requires a large pool of human-labeled training samples. This not…