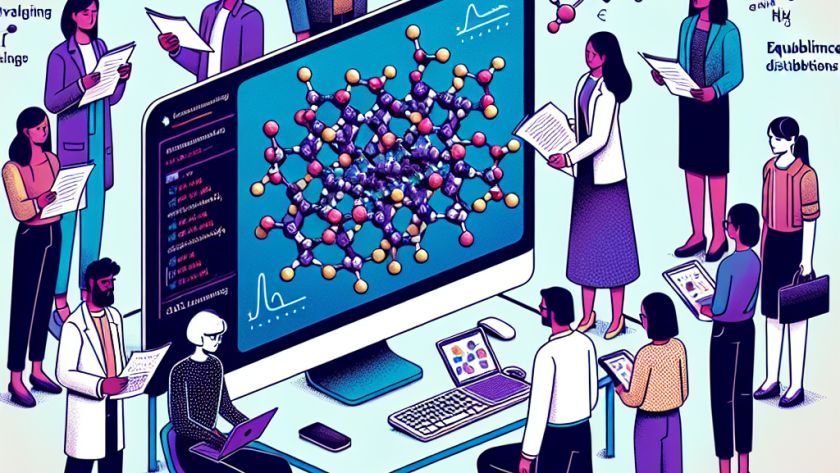

The growth of Large Language Models (LLMs) in Artificial Intelligence and Data Science depends heavily on the volume and accessibility of training data. However, as data consumption accelerates and next-generation LLMs demand ever-larger corpora, concerns are growing about depleting the global reserves of text data needed for training these…