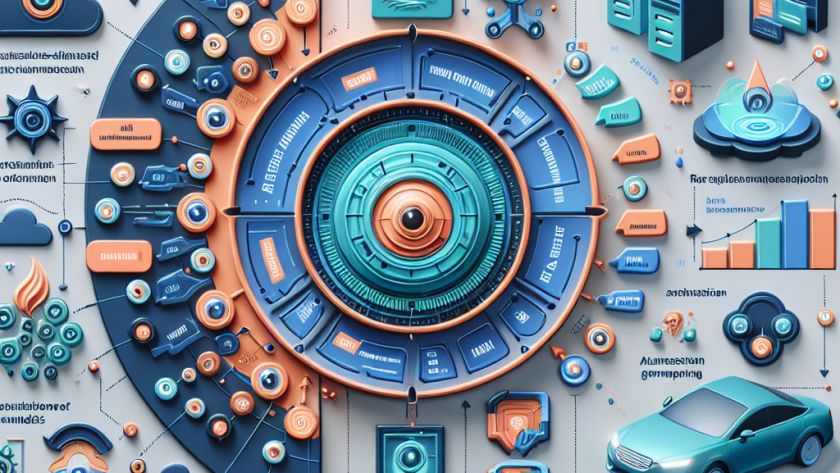

Deep learning continues to evolve, with the attention mechanism playing an integral role in sequence modeling. However, the cost of attention grows quadratically with sequence length, which becomes a serious computational bottleneck in long-context tasks such as genomics and natural language processing. Despite efforts to improve its computational efficiency, existing techniques like Reformer, Routing Transformer, and Linformer…