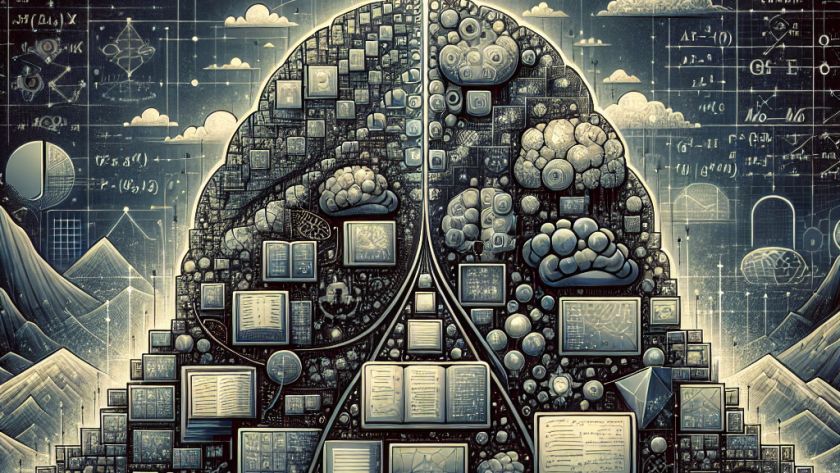

Research into how large language models (LLMs) interpret and process human language has yielded a surprising finding: contrary to expectation, these models represent concepts in a simple, linear manner. To demystify the basis of linear representations in LLMs, researchers from the University of Chicago…