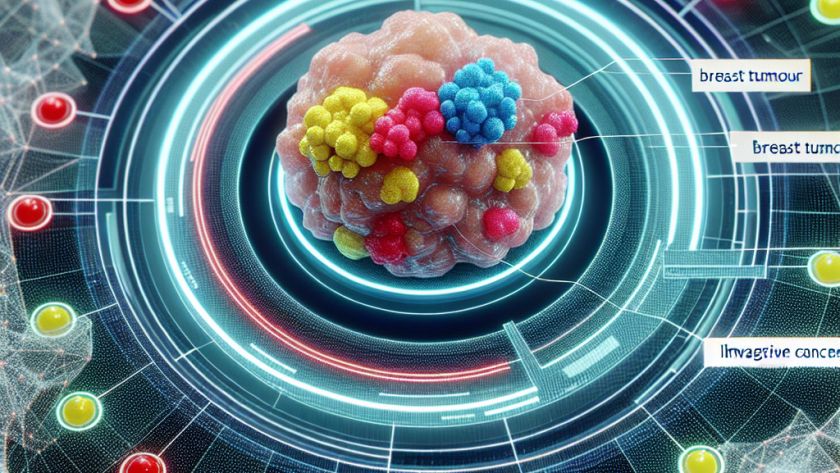

Ductal carcinoma in situ (DCIS), a type of tumor that can develop into an aggressive form of breast cancer, accounts for approximately 25% of all breast cancer diagnoses. DCIS is difficult for clinicians to categorize accurately, which often leads to overtreatment of patients. A team of researchers from the Massachusetts Institute of Technology (MIT) and…