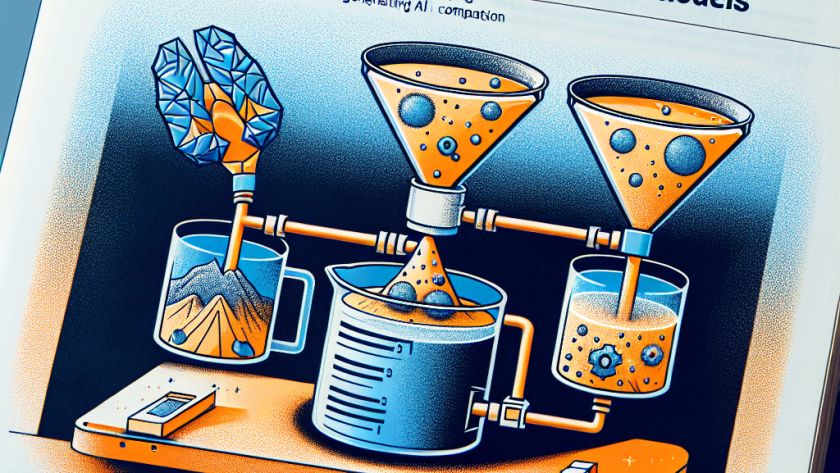

Artificial intelligence (AI) applications are becoming more expansive, driven by multi-modal generative models that integrate data types such as text, images, and video. These models, however, pose complex challenges in data processing and model training, and they call for integrated strategies that refine data and models together to achieve strong AI performance.

Multi-modal generative model development has been plagued…