In June 2024, the AI company Databricks made three major announcements that drew attention across the data science and data engineering communities. The announcements are aimed at streamlining the user experience, improving data management, and simplifying data engineering workflows.

The first significant development is the new generation of Databricks Notebooks. With its emphasis on data-focused authoring, the Notebook…

Large language models (LLMs) have made major strides in several sophisticated applications, yet they struggle with tasks that require complex, multi-step reasoning, such as solving mathematical problems. Strengthening their reasoning abilities is vital for improving their performance on such tasks. LLMs often fail when dealing with tasks requiring logical steps and intermediate-step…

Evaluating Large Language Models (LLMs) requires a systematic, multi-layered approach to accurately identify limitations and areas for improvement. As these models advance and become more intricate, assessing them becomes harder because of the diversity of tasks they are expected to perform. Current benchmarks often employ imprecise, simplistic criteria such as "helpfulness"…

The Allen Institute for AI has recently released the Tulu 2.5 suite, a significant advance in model training that employs Direct Preference Optimization (DPO) and Proximal Policy Optimization (PPO). The suite encompasses an array of models that have been trained on several datasets to augment their reward and value models, with the goal of significantly enhancing…

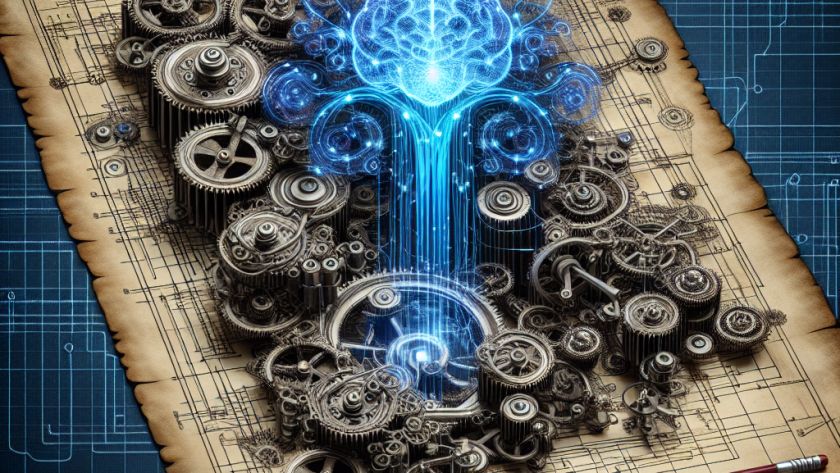

DeepMind researchers have presented TransNAR, a new hybrid architecture that pairs the language comprehension capabilities of Transformers with the robust algorithmic abilities of pre-trained graph neural networks (GNNs), known as neural algorithmic reasoners (NARs). This combination is designed to enhance the reasoning capabilities of language models while maintaining their ability to generalize.

The routine issue faced by…

With their capacity to process and generate human-like text, Large Language Models (LLMs) have become critical tools that empower a variety of applications, from chatbots and data analysis to other advanced AI applications. The success of LLMs relies heavily on the diversity and quality of instructional data used for training.

One of the operative challenges in…

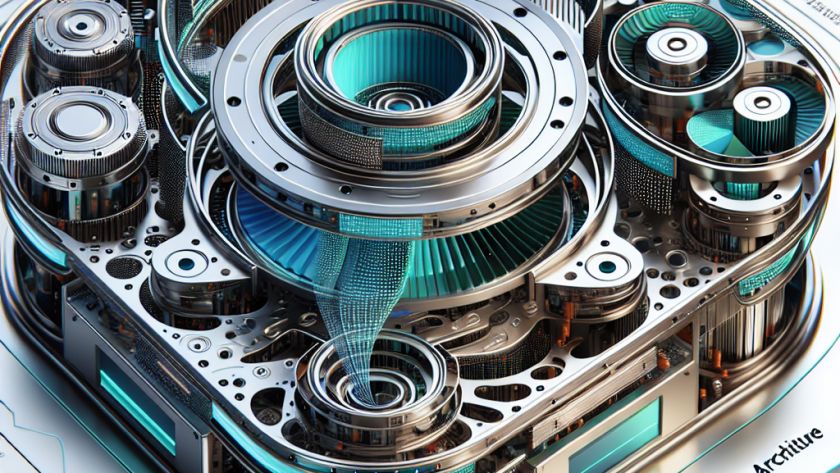

Large Language Models (LLMs) are crucial for a variety of applications, from machine translation to predictive text completion. They face challenges, including capturing complex, long-term dependencies and enabling efficient large-scale parallelisation. Attention-based models, which have dominated LLM architectures, struggle with computational complexity and with extrapolating to longer sequences. Meanwhile, State Space Models (SSMs) offer linear computation…