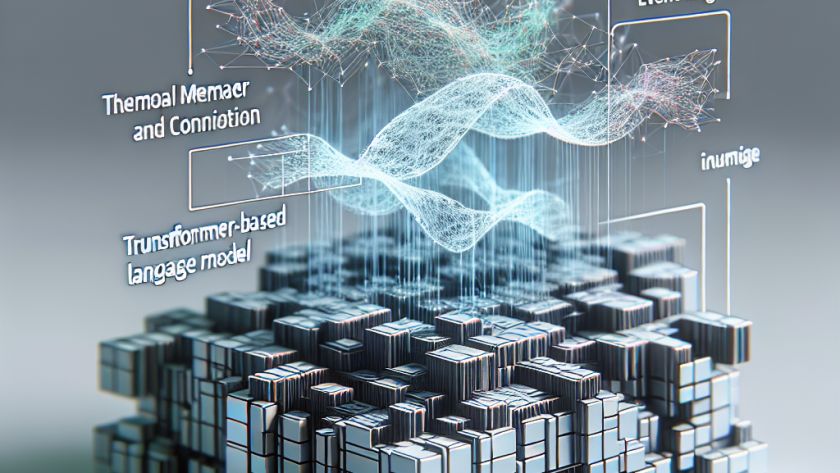

Large Language Models (LLMs) have become increasingly important in AI and data processing tasks, but their sheer size leads to substantial memory requirements and bandwidth consumption. Standard approaches such as Post-Training Quantization (PTQ) and Quantized Parameter-Efficient Fine-Tuning (Q-PEFT) often compromise accuracy and performance, and can be impractical for larger networks. To combat this, researchers have…