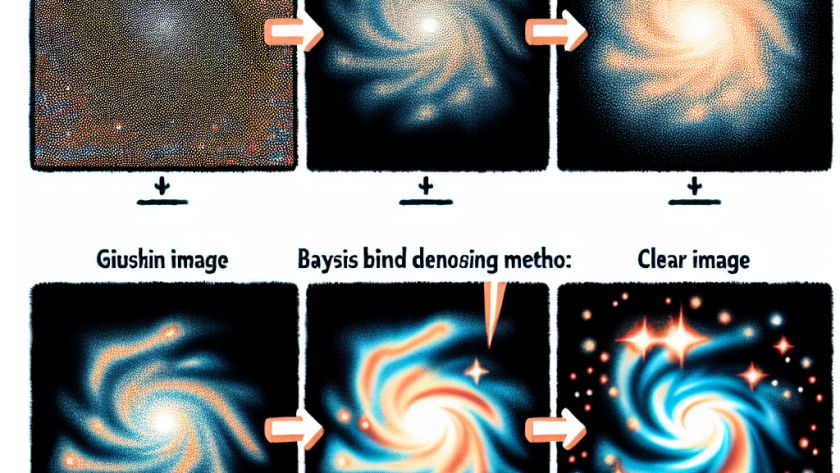

Advances in deep generative models have brought new challenges in denoising, particularly in blind denoising, where the noise level and covariance are unknown. To tackle this problem, a research team from Ecole Polytechnique, Institut Polytechnique de Paris, and the Flatiron Institute developed a method called Gibbs Diffusion (GDiff).

The GDiff approach is a fresh…