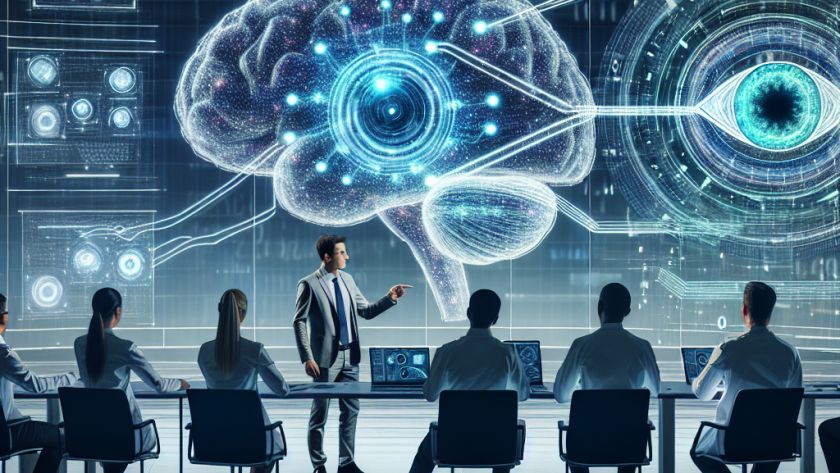

Computer vision, the field concerned with how computers gain understanding from digital images and videos, has grown remarkably in recent years. A significant challenge within this field is the precise interpretation of intricate image details, which requires understanding both global and local visual cues. Despite advances with conventional models like Convolutional Neural Networks (CNNs) and…