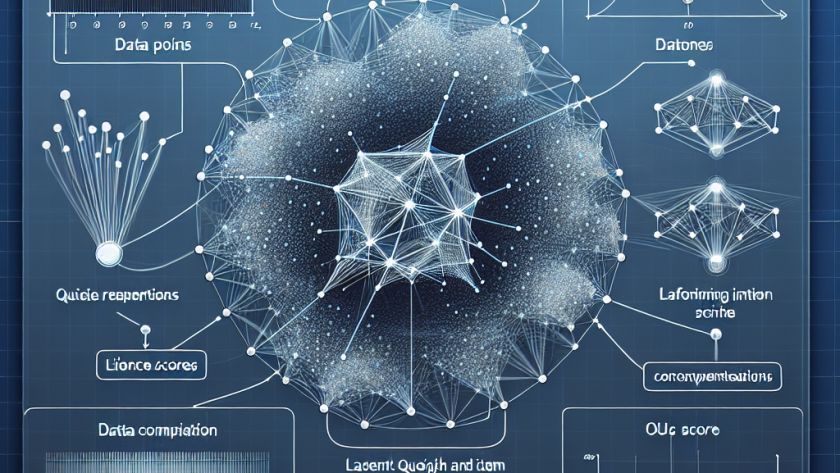

Language modeling, a core task in machine learning, estimates the probability of a sequence of words. It underpins applications such as text summarization, translation, and auto-completion, and it improves the ability of machines to understand and generate human language. However, processing and storing long data sequences can present significant computational and memory…