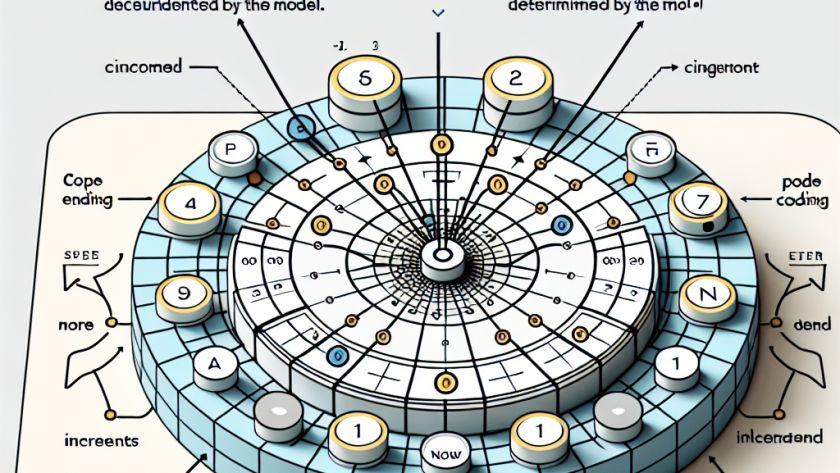

Text, audio, and code are sequences whose meaning depends on the order of their elements. The Transformer architecture that underlies most large language models (LLMs) has no built-in notion of order: without additional information, self-attention treats its input as an unordered set of tokens. Position Encoding (PE) addresses this by assigning a unique vector to each position. This approach is crucial for LLMs to…
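
To make "a unique vector for each position" concrete, here is a minimal sketch of one widely used scheme, the fixed sinusoidal encoding from the original Transformer paper. The text above does not say which scheme it has in mind, so treat this choice as an assumption; the function name `sinusoidal_position_encoding` and the parameters `num_positions` and `dim` are illustrative only.

```python
import math


def sinusoidal_position_encoding(num_positions: int, dim: int) -> list[list[float]]:
    """Build one vector of length `dim` per position (assumed sinusoidal scheme)."""
    if dim % 2 != 0:
        raise ValueError("dim must be even so sine/cosine values pair up")
    table = []
    for pos in range(num_positions):
        vec = []
        # Each (sin, cos) pair uses a different wavelength, set by the index i.
        for i in range(0, dim, 2):
            angle = pos / (10000 ** (i / dim))
            vec.append(math.sin(angle))  # even dimension
            vec.append(math.cos(angle))  # odd dimension
        table.append(vec)
    return table


# Hypothetical usage: encodings for a 4-token sequence with 8-dimensional vectors.
pe = sinusoidal_position_encoding(num_positions=4, dim=8)
for pos, vec in enumerate(pe):
    print(pos, [round(x, 3) for x in vec])
```

In a model, each of these vectors would typically be added to the token embedding at the same position, so that two identical tokens at different positions produce different inputs to attention.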