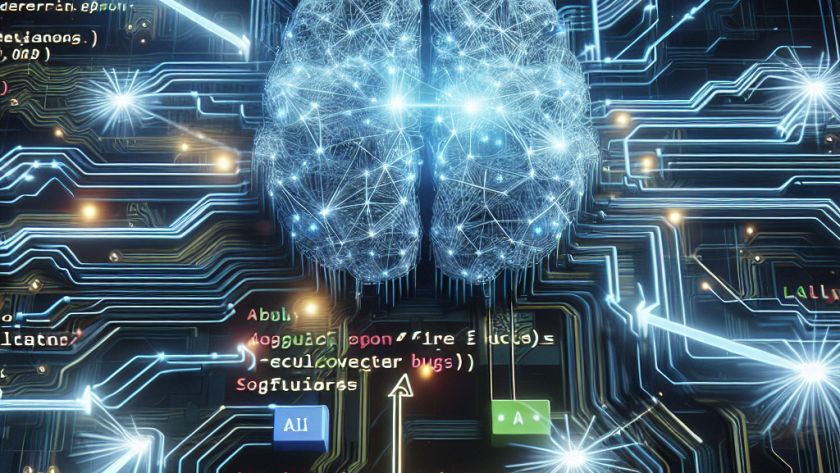

Large Language Models (LLMs) are widely used as task assistants that retrieve the information needed to satisfy users' requests. However, a common problem with LLMs is their tendency to produce erroneous, or "hallucinated", responses. Hallucination in LLMs refers to the generation of information that is not grounded in actual data or in knowledge acquired during the model's…